The shift from physical to virtual servers has changed the process of designing services and the applications that make up these services. In the physical world, every service is hosted on a fixed set of machines and the applications that make up the service receive a portion of the available compute, network and storage resources allocated to its servers. We design the infrastructure to support a worst-case usage scenario. All the applications are in place before the service can run and any changes in the model require a new engineering design cycle. A hardware upgrade implies a different set of design constraints and a software version change may exceed the ability of the hardware to meet the service’s needs.

Network Function Virtualization breaks open the encapsulation of physical servers to make applications that were buried inside those boxes into a manageable collection of virtual functions (VFs) made up of a series of transparent VF components. This allows us to deploy and manage the VFs in near real-time.

Applications are set free from fixed servers and can be deployed wherever resources are accessible. During peak demand, a large amount of compute power and network bandwidth can be allocated to a service; during slack times, an idle application occupies only a small amount of resources. As hardware capabilities evolve and software versions change, functions can evolve to use only as much of the total cloud resources as they need at that time. Whenever hardware infrastructure fails, new elements can be allocated from available inventory and brought into service. The goal of ECOMP is to automate this process and make it independent of application logic.

Dynamic management is not simply changing the number of VF components as the user load changes. Each VF must first be deployed, configured as part of an integrated application, and initialized before a service can start. Once in operation, the VFs must be monitored to ensure that all service-level performance objectives are being satisfied. A virtual application is a much more organic construct than a physical application because it is a series of distributed virtual functions operating across a dynamic infrastructure, not something isolated inside a box with fixed performance characteristics.

The management of such dynamic applications breaks down into two complementary parts: Orchestration and Control. Orchestration is the service fulfillment and planning function necessary to deploy VF components when service conditions change. Orchestrators manage new orders, environmental and scheduling changes, and other planned changes. Controllers monitor and manage specific service components and adjust their performance based upon unexpected disruptions.

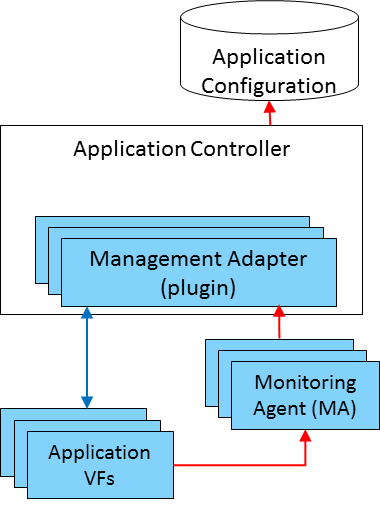

While an orchestrator may be involved in deploying many services, it cannot manage the state of each individual running application. That is the job of the application controller: it adjusts data flows between VF components as new components are added, and it responds to exceptional events, like the sudden loss of an individual component, by altering the configuration of other virtual components to compensate.

In ECOMP, the application control functions are grouped into three parts.

The first is the initialization, configuration and certification of applications during deployment. As new VFs are deployed, the application controller must ensure each component is set up correctly and checked before service requests begin to flow through it.
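The deployment gate described above can be pictured with a small Python sketch. It is illustrative only and is not ECOMP code: the `VFComponent` class, the state names, and the `has_address` and `has_peer` checks are all hypothetical, chosen to show a component moving through initialization, configuration and certification before it is allowed to carry traffic.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class VFComponent:
    """Hypothetical stand-in for one deployed VF component."""
    name: str
    config: Dict[str, str] = field(default_factory=dict)
    state: str = "deployed"


def bring_into_service(
    component: VFComponent,
    config: Dict[str, str],
    checks: List[Callable[[VFComponent], bool]],
) -> List[str]:
    """Initialize, configure and certify a component.

    Returns the names of failed checks; an empty list means the
    component is certified and may receive service requests.
    """
    component.state = "initialized"
    component.config.update(config)
    component.state = "configured"

    failed = [check.__name__ for check in checks if not check(component)]
    component.state = "failed_certification" if failed else "certified"
    return failed


# Hypothetical certification checks.
def has_address(component: VFComponent) -> bool:
    return "ip_address" in component.config


def has_peer(component: VFComponent) -> bool:
    return "peer" in component.config
```

A component that fails any check stays out of the traffic path, which is the point of certifying before service requests begin to flow.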

The second is to monitor and correct failures in application components. The application controller must manage the details of growing and shrinking the number of components as demand changes. It runs regular audits of configuration parameters, self-checks and status queries to ensure each component is behaving correctly. It also services alerts from other ECOMP functions to accommodate changes in the cloud infrastructure, like bypassing a component that is no longer functioning. When an audit fails or an alert arrives, the application controller takes the appropriate corrective action.
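As a rough illustration of the audit step, the sketch below compares the configuration a component is expected to have with what it reports, then picks a corrective action. The function names and the three action labels (`bypass`, `reconfigure`, `none`) are assumptions made for this example, not part of ECOMP.

```python
from typing import Dict, Optional, Tuple

Drift = Dict[str, Tuple[str, Optional[str]]]


def audit(expected: Dict[str, str], reported: Dict[str, str]) -> Drift:
    """Return every parameter whose reported value differs from the expected one.

    Each entry maps the parameter name to (expected, reported); a missing
    parameter is reported as None.
    """
    drift: Drift = {}
    for key, want in expected.items():
        got = reported.get(key)
        if got != want:
            drift[key] = (want, got)
    return drift


def corrective_action(drift: Drift, healthy: bool) -> str:
    """Choose a hypothetical action from the audit result and a health flag."""
    if not healthy:
        return "bypass"        # route around a component that stopped working
    if drift:
        return "reconfigure"   # push the expected values back to the component
    return "none"
```

In this sketch the health flag stands in for both self-checks and alerts from elsewhere in the platform; a real controller would treat those as separate inputs.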

The third is to support commands from other ECOMP elements associated with service lifecycle events, such as policy-driven requests for additional capacity, scheduled configuration changes or version upgrades. The application controller executes these commands in a stateful fashion. It ensures that conflicting requests are not serviced simultaneously, that requests are ordered properly, and that failed requests are rolled back, so each VF keeps behaving consistently within the larger application.
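One way to picture stateful command execution is the minimal Python sketch below. It is not the ECOMP implementation: the `VFController` class and its methods are hypothetical. It assumes a command is a list of functions that mutate a configuration dictionary, uses a non-blocking lock to refuse a second command while one is in progress, runs queued commands in submission order, and restores a snapshot when a step fails.

```python
import copy
import threading
from typing import Callable, Dict, List, Tuple

Step = Callable[[Dict[str, str]], None]


class CommandRejected(Exception):
    """Raised when a command arrives while another one is still running."""


class VFController:
    """Hypothetical controller for the configuration of a single VF."""

    def __init__(self, config: Dict[str, str]) -> None:
        self.config = dict(config)
        self.log: List[Tuple[str, str]] = []
        self._lock = threading.Lock()

    def execute(self, name: str, steps: List[Step]) -> bool:
        # Refuse to service two commands against the same VF at once.
        if not self._lock.acquire(blocking=False):
            raise CommandRejected(name)
        snapshot = copy.deepcopy(self.config)
        try:
            for step in steps:
                step(self.config)
            self.log.append((name, "committed"))
            return True
        except Exception:
            # Roll back so the VF is left in its last consistent state.
            self.config = snapshot
            self.log.append((name, "rolled_back"))
            return False
        finally:
            self._lock.release()

    def run_all(self, commands: List[Tuple[str, List[Step]]]) -> List[bool]:
        """Execute commands strictly in the order they were submitted."""
        return [self.execute(name, steps) for name, steps in commands]
```

For example, an upgrade whose second step raises leaves the configuration exactly as it was before the command started, and the log records it as `rolled_back`.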

The ability to flexibly manage applications depends on following several simple guidelines. Applications should use a shared catalog of VFs so management policies can be developed once and applied across many applications. Standardized and open management interfaces, such as NETCONF, allow simple monitoring of application state, initialization and application control. Developers should provide a machine-readable model, such as a YANG or YAML specification, of the interfaces used. Resource requirements such as CPU, memory and other performance parameters should be specified as policy rules to assist in automating dynamic behaviors. Modular applications with externally provisioned values, such as IP addresses, allow for simplified control strategies. Open transaction interfaces make it easy to generate test transactions.
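To make the idea of resource requirements expressed as policy rules concrete, here is a small hedged sketch in Python. The `ScalingPolicy` fields and the thresholds used below are invented for illustration; the post does not define a policy format. The sketch shows how a rule, rather than application logic, can decide how many component instances should run.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class ScalingPolicy:
    """Hypothetical policy rule for one VF component type."""
    metric: str              # name of the observed metric, e.g. "cpu"
    scale_out_above: float   # add an instance when the metric exceeds this
    scale_in_below: float    # remove an instance when the metric falls below this
    min_instances: int
    max_instances: int


def desired_instances(
    policy: ScalingPolicy, current: int, observed: Dict[str, float]
) -> int:
    """Return the instance count the policy asks for, clamped to its bounds."""
    value = observed[policy.metric]
    if value > policy.scale_out_above:
        target = current + 1
    elif value < policy.scale_in_below:
        target = current - 1
    else:
        target = current
    return max(policy.min_instances, min(policy.max_instances, target))
```

Because the rule is data, the same controller logic can be reused across every VF in a shared catalog, with only the policy values changing.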


Kathy Meier-Hellstern – Assistant Vice President in the AT&T Advanced Technology Platforms and Architecture organization
